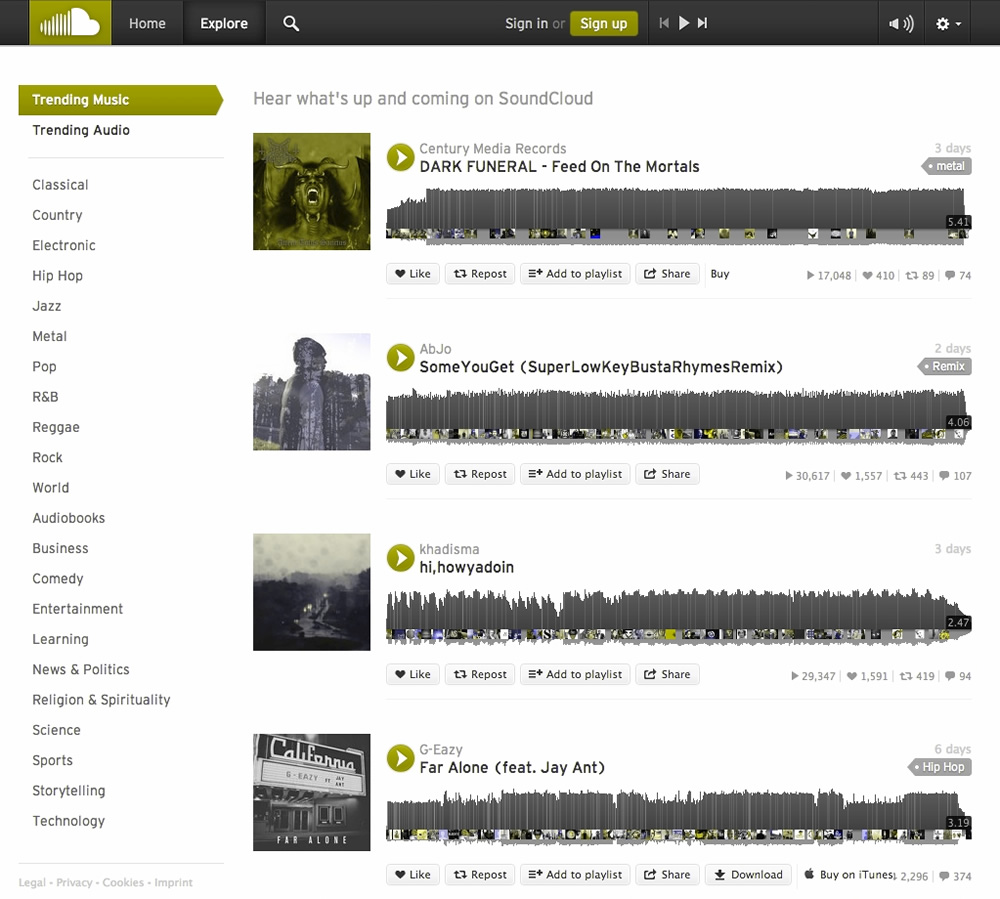

Classification models are tested by comparing the predicted values to known target values in a set of test data.

The historical data for a Classification project is typically divided into two data sets: one for building the model and one for testing it. The test data must be compatible with the data used to build the model and must be prepared in the same way that the build data was prepared.

These are the ways to test Classification and Regression models:

- By splitting the input data into build data and test data. The test data is created by randomly splitting the build data into two subsets. 40 percent of the input data is used for test data.
- By using all the build data as test data.
- By attaching two Data Source nodes to the build node. The first data source that you connect to the build node is the source of the build data. The second node that you connect is the source of the test data.
- By deselecting Perform Test in the Test section of the Properties pane and using a Test node.

The Test section defines how tests are done. By default, all Classification and Regression models are tested.

12.1.1 Test Metrics for Classification Models

Oracle Data Miner provides test metrics for Classification models so that you can evaluate the model. Test metrics assess how accurately the model predicts the known values. Test settings specify the metrics to be calculated and control the calculation of the metrics. By default, Oracle Data Miner calculates the following metrics for Classification models:

- Performance measurements, Predictive Confidence, Average Accuracy, Overall Accuracy, and Cost
- Performance Matrix, also known as Confusion Matrix

You can change the defaults using the preference setting.

To view test results, first test the model or models in the node, either with the default test in the Classification node or with a Test node. Then:

- Right-click the node and select View Test Results.
- To view a model, select the model that you are interested in. The Classification Model Test View opens.
- To compare the test results for all models in the node, select Compare Test Results.

Predictive Confidence provides an estimate of how accurate the model is. Predictive Confidence is a number between 0 and 1. Oracle Data Miner displays Predictive Confidence as a percentage. For example, a Predictive Confidence of 59 means that the Predictive Confidence is 59 percent (0.59).

Predictive Confidence indicates how much better the predictions made by the tested model are than predictions made by a naive model. The naive model always predicts the mean for numerical targets and the mode for categorical targets.

Predictive Confidence is defined by the following formula:

Predictive Confidence = MAX[(1 - Error of Model / Error of Naive Model), 0] X 100

Where:

- Error of Model is (1 - Average Accuracy/100)
- Error of Naive Model is (Number of target classes - 1) / Number of target classes

The value is interpreted as follows:

- If the Predictive Confidence is 0, then the predictions of the model are no better than the predictions made by using the naive model.
- If the Predictive Confidence is 1, then the predictions are perfect.
- If the Predictive Confidence is 0.5, then the model has reduced the error of a naive model by 50 percent.

In a classification problem, it may be important to specify the costs involved in making an incorrect decision. Doing so can be useful when the costs of different misclassifications vary significantly.

For example, suppose the problem is to predict whether a user is likely to respond to a promotional mailing. The target has two categories: YES (the customer responds) and NO (the customer does not respond).
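
As a rough illustration of the Predictive Confidence calculation, the following Python sketch assumes the formula is MAX[(1 - Error of Model / Error of Naive Model), 0] X 100, with Error of Model equal to (1 - Average Accuracy/100) and Error of Naive Model equal to (Number of target classes - 1) / Number of target classes. The function name `predictive_confidence` and its parameters are hypothetical and are not part of Oracle Data Miner; the sketch only mirrors the arithmetic.

```python
def predictive_confidence(average_accuracy_pct: float, num_target_classes: int) -> float:
    """Return Predictive Confidence as a percentage (0 to 100).

    Hypothetical helper for illustration only; it is not an Oracle Data Miner API.
    average_accuracy_pct is the Average Accuracy expressed as a percentage.
    """
    if num_target_classes < 2:
        raise ValueError("a classification target needs at least two classes")

    # Error of Model = 1 - Average Accuracy / 100
    error_of_model = 1 - average_accuracy_pct / 100

    # Error of Naive Model = (number of classes - 1) / number of classes
    error_of_naive_model = (num_target_classes - 1) / num_target_classes

    # Clamp at 0 so a model worse than the naive model scores 0, not a negative value.
    return max(1 - error_of_model / error_of_naive_model, 0.0) * 100


if __name__ == "__main__":
    # Binary target (YES / NO): the naive model's error is 1/2.
    print(predictive_confidence(75.0, 2))   # 50.0: half of the naive error removed
    print(predictive_confidence(50.0, 2))   # 0.0: no better than the naive model
    print(predictive_confidence(100.0, 2))  # 100.0: perfect predictions
```

Under these assumptions, a two-class model with an Average Accuracy of 75 percent has an error of 0.25 against a naive error of 0.5, so it is reported as 50 percent, matching the reading that a value of 0.5 means the naive model's error has been cut in half.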
